EP&T: Analog compute is the key to powerful, yet power-efficient, AI processing

by EP&T

The power advantages of analog compute at the lowest level come from being able to perform massively parallel vector-matrix multiplications with parameters stored inside flash memory arrays.

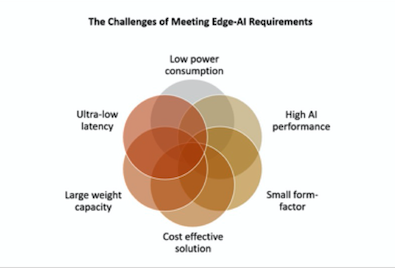

As artificial intelligence (AI) applications spread across a growing number of industries, the need for more throughput, more storage capacity and lower power grows with them. At the same time, machine learning models are growing at an exponential rate. Traditional digital processors struggle to deliver the performance these models require with low enough power consumption and adequate memory resources, especially for large models running at the edge. This is where analog computing comes in, enabling companies to get more performance at lower power consumption in a small form factor that’s also cost-efficient.

The power advantages of analog compute at the lowest level come from being able to perform massively parallel vector-matrix multiplications with parameters stored inside flash memory arrays. Analog compute with flash storage is also referred to as analog compute in-memory. Tiny electrical currents are steered through a flash memory array that stores reprogrammable neural network weights, and the result is captured through analog-to-digital converters (ADCs). Because analog compute handles the vast majority of the inference operations, the analog-to-digital and digital-to-analog energy overhead can be kept to a small portion of the overall power budget, and a large drop in compute power can be achieved. There are also many second-order, system-level effects that deliver a large drop in power. For example, when the amount of data movement on the chip is multiple orders of magnitude lower, the system clock speed can be kept up to 10x lower than in competing systems and the design of the control processor is much simpler.
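To make the data path concrete, here is a minimal Python sketch of an idealized analog vector-matrix multiply. It is an illustration under stated assumptions, not a description of any particular chip. The function names (`quantize`, `analog_vmm`), the 8-bit converter resolutions and the use of signed weights in a single cell are all hypothetical simplifications, and noise, device variation and non-linearity are ignored.

```python
def quantize(value, lo, hi, bits):
    """Snap value to the nearest level of a uniform converter over [lo, hi]."""
    levels = (1 << bits) - 1                  # number of steps between lo and hi
    clipped = min(max(value, lo), hi)         # converters saturate outside their range
    code = round((clipped - lo) / (hi - lo) * levels)
    return lo + code * (hi - lo) / levels


def analog_vmm(inputs, weights, dac_bits=8, adc_bits=8):
    """Idealized analog vector-matrix multiply (assumed model, not a real device).

    inputs:  activations in [0, 1], one per array row
    weights: rows x cols matrix of values in [-1, 1], standing in for the
             programmed state of each flash cell
    """
    rows = len(weights)
    cols = len(weights[0])

    # Digital-to-analog step: each activation becomes a row drive level.
    drives = [quantize(x, 0.0, 1.0, dac_bits) for x in inputs]

    outputs = []
    for c in range(cols):
        # Every cell contributes drive * weight, and the contributions add up
        # on the shared column wire. All columns do this at the same time in
        # hardware; the loop here only models the arithmetic.
        column_current = sum(drives[r] * weights[r][c] for r in range(rows))

        # Analog-to-digital step: one conversion per column, with the full-scale
        # range set to the worst-case sum of +/- rows.
        outputs.append(quantize(column_current, -float(rows), float(rows), adc_bits))
    return outputs


example_inputs = [0.5, 0.25, 1.0]
example_weights = [
    [0.5, -1.0],
    [1.0, 0.0],
    [-0.25, 0.5],
]
print(analog_vmm(example_inputs, example_weights))
```

In this model, the multiply-accumulate work grows with rows times columns while the number of conversions grows only with rows plus columns, which is one way to see why converter overhead can stay a small share of the power budget when the arrays are large.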

Read the full interview on EP&T Magazine, one of Canadian Manufacturing’s partner publications.